Oracle Database 11g Release 2 (11gR2) makes it easier than ever to isolate and identify potential root causes of poor I/O performance. This article focuses on the various Oracle and non-Oracle tools to generate sample workloads to provide sufficient metrics for detection and eventual resolution of performance bottlenecks within the database’s underlying input/output (I/O) subsystem.

Synopsis. Tuning the I/O performance of an Oracle 11gR2 database is still just as much art as science. Oracle 11g Release 2 (11gR2) makes it easier than ever, however, to isolate and identify potential root causes of poor I/O performance. This article – the second in this series – focuses on the various Oracle and non-Oracle tools to generate sample workloads to provide sufficient metrics for detection and eventual resolution of performance bottlenecks within the database’s underlying input/output (I/O) subsystem.

The prior article in this series discussed much of the theory behind I/O performance tuning, especially as it related to Oracle 11gR2 database applications. This article will address several key practical concepts, including how to:

- Measure expected and actual I/O performance using established I/O monitoring tools

- Create pre-database and post-database I/O performance workloads

Let’s start off with a discussion of how to monitor all of the crucial components of any I/O subsystem via a plethora of available tools – some more sophisticated than others. I’m going to focus on the Linux operating system for this article series, but corollary utilities in most cases exist in the Windows OS environment and certainly exist in all UNIX OS environments.

I/O Performance Monitoring Tools

Most of the tools that Oracle DBAs continue to use for I/O performance monitoring offer the ability to reveal sufficient I/O tuning information from deep within the operating system. These tools break down into two basic groups:

Command-Line Tools. These reliable standard command-line tools have been around since the dawn of computing (well, at least since green characters were typed upon black backgrounds). Since they are character-based, however, they don’t offer much in the way of slick display; on the other hand, they tend to present a relatively small memory footprint – and that may be crucial when trying to analyze an I/O performance problem that’s masquerading as a memory-bound or CPU-bound issue. Here are a few of my favorites:

- Probably the best-known utility, iostat lets me view basic or extended statistics about the physical storage devices that my server is aware of. For example, I can instruct iostat to look at the number of transactions per second (TPS), the number of OS blocks read or written, and the rate at which they’re written (blocks per second). I can also tell iostat to constantly monitor specific devices for a specific number of repetitions. I’ve captured some samples of iostat execution in Listing 2.1.1.

- Likewise, the vmstat utility offers the capability to see total reads and writes to physical devices (via the –d option) and for a specific partition (via the –p option). A few samples of vmstat execution are shown in Listing 2.1.2.

- Though used less frequently, the sar utility (via the –b option) can also be used to dump historical information about transactions per second, reads and writes per second, and block reads and writes per second from the system architecture history files. I’ve shown results from a sample sar -b execution in Listing 2.1.3.

Oracle-Based Tools. Not surprisingly, the Oracle Database software itself also offers several I/O performance monitoring tools.

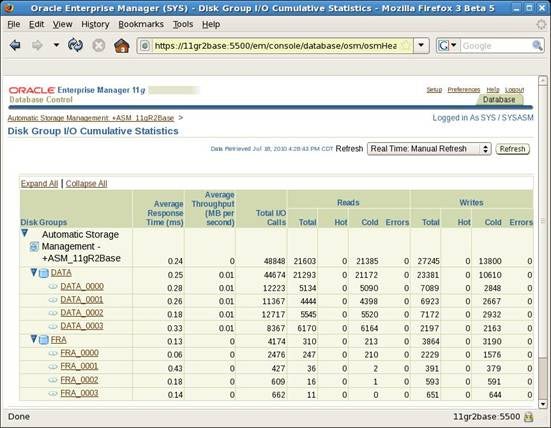

- Enterprise Manager (EM). ASM was first introduced in Oracle 10gR1. Concurrent with its release, Oracle DBAs were finally able to graphically view I/O activity and statistics with full drill-down capability to the ASM disk group and ASM disk level. Figure 2.1 below shows a summary screen from Oracle 11gR2 EM to show the level of I/O performance detail for ASM:

Figure 2.1: Enterprise Manager ASM I/O Performance Details.

Starting in Oracle 11gR1, EM also offered the ability to view I/O statistics from several different perspectives, including within background and foreground process type, I/O type, and even resource consumer group. I’ve included a few sample screens below to show this 11g feature, and I’ll be using this extensively in future articles to investigate and drill down through real-time I/O performance metrics.

Figure 2.2: Enterprise Manager I/O Performance Statistics Within I/O Function.

Figure 2.3: Enterprise Manager I/O Performance Statistics Within I/O Type.

- Automatic Workload Repository (AWR) Reports. Also released in Oracle 10gR1, AWR reports offer the ability to see read and write I/O statistics at the tablespace and datafile level. And the same I/O profiling information present in Oracle 11gR2 EM is also captured within every AWR report in this release, as shown in this sample AWR report. (Note that AWR’s older and feebler brother, StatsPack, also offers similar but more limited views into tablespace and datafile I/O activity.)

- ASMCMD: iostat. Starting in Oracle 11gR2, the ASMCMD command-line utility offers the iostat subcommand for any ASM file systems that are available. Remember that ASMCMD must be invoked from a terminal session that’s logged as the owner of the Oracle 11gR2 Grid Infrastructure home (typically, the grid user account) – not as the owner of the Oracle database home. I’ve pulled together a few examples of how this information can be captured and displayed in Listing 2.2.

- SQL*Plus Queries. Since the inception of ASM in Oracle 10gR1, the Oracle ASM instance has always exposed all ASM-related information through several dynamic views. I’ve often written reports directly against these views to obtain the information I wanted in the format desired. I’ve included several queries in Listing 2.3.1 and corresponding sample output from these queries in Listing 2.3.2.

I/O Generation Tools: Extra-Database

Several tools are useful for what I like to call “extra-database” analysis. In other words, these tools can be used to generate an I/O workload from outside an Oracle database environment. However, these tools must be used carefully – and probably never within a production database environment! – because they literally could care less about the state of any extant Oracle database files.

- The venerable dd command can actually be used as a crude I/O load generation tool. It’s simple to use, comes free with Linux (and with most flavors of Unix). Note that while there is no such corollary tool as dd for the Windows OS, it’s possible to use Linux emulators like MKS Toolkit to provide similar functionality.

- vdbench is a free load generation tool originally created by Sun Microsystems that’s favored by many storage administrators and I/O performance gurus among the largest storage vendors (e.g., EMC, Hitachi, IBM, NetApp) for generating large, predictable, and sophisticated I/O workloads. It can also be run against either Windows-based, UNIX-based, or Linux-based “cooked” file systems, as well as “raw” file systems, and it also offers a rudimentary GUI for performance test execution. Best of all, it’s extremely well-documented. Vdbench also offers some excellent I/O performance reports and real-time performance monitoring capabilities. I’ll demonstrate some examples of using vdbench to generate extreme I/O workloads in future articles in this series.

- ORION. The Oracle Input/Output Numbers calibration tool is a rather bare-bones, freely-supplied set of tools available from Oracle Technology Network. ORION has been around for many years, and it supports every major OS and hardware environment that Oracle databases run on, including UNIX, Linux, and Windows. It’s also simple to install – on Linux, it’s just an executable file – and relatively easy to configure. The documentation provided is a bit sparse, but more than sufficient for setting up ORION simply and quickly.

By the way, in my opinion the documentation on ORION is just a little too subtle on what it does “under the covers” to provide a write-intensive workload. Here’s a snippet from the output that pops up when I invoked the 64-bit version of ORION from a terminal window:

[oracle@11gR2Base ~]$

./orion_x86_64

ORION: ORacle IO Numbers -- Version 11.1.0.7.0 Parse error: View help screen using ./orion_x86_64 -help. [oracle@11gR2Base ~]$ ./orion_x86_64 -help ORION: ORacle IO Numbers -- Version 11.1.0.7.0 ORION runs IO performance tests that model Oracle RDBMS IO workloads. It measures the performance of small (2-32K) IOs and large (128K+) IOs at various load levels. Each Orion data point is done at a specific mix of small and large IO loads sustained for a duration. Anywhere from a single data point to a two-dimensional array of data points can be tested by setting the right options. An Orion test consists of data points at various small and large IO load levels. These points can be represented as a two-dimensional matrix: Each column in the matrix represents a fixed small IO load. Each row represents a fixed large IO load. The first row is with no large IO load and the first column is with no small IO load. An Orion test can be a single point, a row, a column or the whole matrix. The 'run' parameter is the only mandatory parameter. Defaults are indicated for all other parameters. For additional information on the user interface, see the Orion User Guide. <testname> is a filename prefix. By default, it is "orion". It can be specified with the 'testname' parameter. <testname>.lun should contain a carriage-return-separated list of LUNs The output files for a test run are prefixed by <testname>_<date> where date is "yyyymmdd_hhmm". The output files are: <testname>_<date>_summary.txt - Summary of the input parameters along with min. small latency, max large MBPS and/or max. small IOPS. <testname>_<date>_mbps.csv - Performance results of large IOs in MBPS <testname>_<date>_iops.csv - Performance results of small IOs in IOPS <testname>_<date>_lat.csv - Latency of small IOs <testname>_<date>_tradeoff.csv - Shows large MBPS / small IOPS combinations that can be achieved at certain small latencies <testname>_trace.txt - Extended, unprocessed output WARNING: IF YOU ARE PERFORMING WRITE TESTS, BE PREPARED TO LOSE ANY DATA STORED ON THE LUNS. Mandatory parameters: run Type of workload to run (simple, normal, advanced, dss, oltp) simple - tests random 8K small IOs at various loads, then random 1M large IOs at various loads. normal - tests combinations of random 8K small IOs and random 1M large IOs advanced - run the workload specified by the user using optional parameters dss - run with random 1M large IOs at increasing loads to determine the maximum throughput oltp - run with random 8K small IOs at increasing loads to determine the maximum IOPS Optional parameters: testname Name of the test run num_disks Number of disks (physical spindles). Default is the number of LUNs in <testname>.lun size_small Size of small IOs (in KB) - default 8 size_large Size of large IOs (in KB) - default 1024 type Type of large IOs (rand, seq) - default rand rand - Random large IOs seq - Sequential streams of large IOs num_streamIO Number of concurrent IOs per stream (only if type is seq) - default 4 simulate Orion tests on a virtual volume formed by combining the provided volumes in one of these ways (default concat): concat - A serial concatenation of the volumes raid0 - A RAID-0 mapping across the volumes write Percentage of writes (SEE WARNING ABOVE) - default 0 cache_size Size *IN MEGABYTES* of the array's cache. Unless this option is set to 0, Orion does a number of (unmeasured) random IO before each large sequential data point. This is done in order to fill up the array cache with random data. This way, the blocks from one data point do not result in cache hits for the next data point. Read tests are preceded with junk reads and write tests are preceded with junk writes. If specified, this 'cache warming' is done until cache_size worth of IO has been read or written. Default behavior: fill up cache for 2 minutes before each data point. duration Duration of each data point (in seconds) - default 60 num_small Number of outstanding small IOs (only if matrix is point, col, or max) - no default num_large For random, number of outstanding large IOs. For sequential, number of streams (only if matrix is point, row, or max) - no default matrix An Orion test consists of data points at various small and large IO load levels. These points can be represented as a two-dimensional matrix: Each column in the matrix represents a fixed small IO load. Each row represents a fixed large IO load. The first row is with no large IO load and the first column is with no small IO load. An Orion test can be a single point, a row, a column or the whole matrix, depending on the matrix option setting below (default basic): basic - test the first row and the first column detailed - test the entire matrix point - test at load level num_small, num_large col - varying large IO load with num_small small IOs row - varying small IO load with num_large large IOs max - test varying loads up to num_small, num_large verbose Prints tracing information to standard output if set. Default -- not set Examples For a preliminary set of data -run simple For a basic set of data -run normal To evaluate storage for an OLTP database -run oltp To evaluate storage for a data warehouse -run dss To generate combinations of 32KB and 1MB reads to random locations: -run advanced -size_small 32 -size_large 1024 -type rand -matrix detailed To generate multiple sequential 1MB write streams, simulating 1MB RAID0 stripes -run advanced -simulate RAID0 -stripe 1024 -write 100 -type seq -matrix col -num_small 0

I’ll likewise demonstrate the use of ORION in future articles in this series, so please stay tuned.

I/O Generation Tools: Freeware

What if I need to capture and then regenerate a specific application workload? There are several tools available:

- The Transaction Processing Council (TPC) provides a series of scriptsthat can be used to construct and load an Oracle database of an intended size and application workload “intent” (e.g. OLTP, OLAP). Once a database of an appropriate type is created, TPC does provide extensive documentation for its numerous workloads, including its TPC-C (OLTP), TPC-E (Extended OLTP), and TPC-H (data warehousing / decision support) application database models. The TPC-E However, TPC only provides one tool that can be publicly downloaded for TPC-E application workload generation, and that needs to be compiled using Microsoft’s Visual Studio and C++. I’ll demonstrate how to set up these schemas in the very next article, and I’ll demonstrate the use of a similar load generation tool in future articles as well.

-

Swingbench is another popular freeware tool for load generation. Written by well-known author and blogger Dominic Giles, it also provides command-line as well as GUI interfaces for creating some pretty sophisticated workloads for Oracle 9i, 10g, and 11g databases. I’ll demonstrate Swingbench’s capabilities in a future article in this series.

I/O Generation Tools: Not-So-Freeware

What if I need to capture and then regenerate a specific application workload, and I have significant budget to spend doing that? While free tools are great, the old saying that “You get what you pay for” certainly holds true here … especially when support for a technical problem or special testing case warrants outside help. The good news here is that there are several tools available; here are three of my favorite ones:

-

Mercury Interactive originally developed the LoadRunner tool many years ago, and Hewlett Packard now owns it. It’s definitely the most mature tool in this group. LoadRunner actually captures application keystrokes and allows me to replay a workload using those keystrokes as a user profile, and then crank up multiple versions of that workload – say, 20 OLTP users, five DSS users, three OLAP users, and two DBAs – against an Oracle database. However, the cost for such a sophisticated tool can easily run to six figures; furthermore, it may not be the best way to capture and replay an I/O performance workload because what I really need to duplicate is the I/O profile generated by the SQL statements being executed against the database, not necessarily the exact application keystrokes.

-

Quest Software’s Benchmark Factory is one of the more sophisticated tools for generating I/O workloads I’ve encountered. I’ve used both the 30-day trial version (which is surprisingly robust!) as well as a fully-licensed version to generate extremely large I/O performance workloads. It also makes short work of creating and loading an initial TPC-C, TPC-H, or SPC-1 model database that conforms to official standards for correlative comparisons of I/O workloads between completely different I/O subsystem configurations. Benchmark Factory offers quite an intuitive GUI, allows extensive modifications to standard workloads if so desired, and features excellent reporting and graphing capabilities. Again, be aware that Benchmark Factory is anything but free, and its licensing costs – plus the hardware to run Benchmark Factory agents to generate the required I/O performance workloads! – can easily run into six-figure USD ranges.

-

Finally, I’d be remiss to ignore Oracle Database Replay (DBR). DBR does require additional Oracle software licensing, but it may well be worth evaluating as an alternative to both LoadRunner and Benchmark Factory in terms of overall cost. DBR was first available in Oracle 11gR1; I’ve written about it extensively in a prior three-part article series. DBR’s basic functionality hasn’t changed dramatically in Oracle 11gR2, with one notable exception: It’s now possible to scale up a query workload based on a supplied multiplier factor. (For obvious reasons, this wouldn’t work very well for DML statements!) I’ll leverage that capability in a future article within this series.

Next Steps

Now that I’ve covered some of the more common I/O load generation and performance testing tools, it’s time to actually tackle some real-world testing. The next article is this series will focus on how to:

- Implement an extra-database I/O performance test

- Measure the results of an extra-database I/O performance test

- Create and load sample TPC-C and TPC-H database schemas prior to intra-database I/O performance tests

References and Additional Reading

Before you proceed to experiment with any of these new features, I strongly suggest that you first look over the corresponding detailed Oracle documentation before trying them out for the first time. I’ve drawn upon the following Oracle Database 11g Release 2 documents for this article’s technical details:

E10881-02 Oracle Database 11gR2 New Features

E10595-05 Oracle Database 11gR2 Administrator’s Guide

E10713-03 Oracle Database 11gR2 Concepts

E10820-02 Oracle Database 11gR2 Reference

E10500-02 Oracle Database 11gR2 Storage Administrator’s Guide

E10577-05 Oracle Database 11gR2 PL/SQL Packages and Types Reference